FCC allows big ISPs to add performance-enhancing juice to speed tests, WSJ says

![Pixabay [CC0] Syringe](https://www.tellusventure.com/images/2019/syringe.jpg)

The fast, reliable broadband service claims endorsed by the Federal Communications Commission are based on test data that's been doctored by California's monopoly model Internet service providers, according to a Wall Street Journal article by Shalini Ramachandran, Lillian Rizzo and Drew FitzGerald (h/t to Jim Warner for sending me the link).

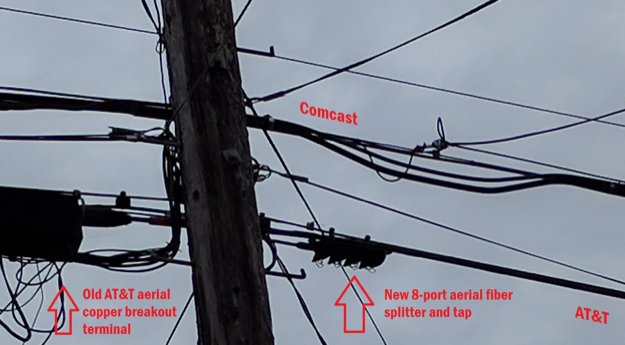

Annual speed measurements taken to evaluate U.S. broadband service are “juiced” by AT&T, Comcast, Charter Communications and others, who know ahead of time where the tests are run and afterwards lobby the FCC to suppress bad results and hype good ones, the story says…

… [AT&T] pushed the Federal Communications Commission to omit unflattering data on its DSL internet service…

In the end, the DSL data was left out of the report released late last year, to the chagrin of some agency officials.